Post by permutojoe on Apr 15, 2017 20:51:10 GMT

What if the universe as we know it is a virtual reality where only what we perceive is rendered, especially video-wise? From a computing-power standpoint, aren't we pretty close to this, if not easily there already?
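Rendering only what is perceived is roughly what game engines call view culling: objects outside the viewer's field of view are skipped. Here is a minimal 2D sketch. It assumes a flat world, a single observer with a viewing cone and a maximum view distance, and hypothetical names (`Observer`, `is_visible`, `visible_objects`). Real engines cull against a 3D frustum and also handle occlusion, which this ignores.

```python
import math
from dataclasses import dataclass

@dataclass
class Observer:
    x: float
    y: float
    heading: float        # direction the observer faces, in radians
    fov: float            # full field-of-view angle, in radians
    view_distance: float  # nothing beyond this is drawn

def is_visible(obs: Observer, px: float, py: float) -> bool:
    """True if point (px, py) lies inside the observer's 2D view cone."""
    dx, dy = px - obs.x, py - obs.y
    dist = math.hypot(dx, dy)
    if dist > obs.view_distance:
        return False
    if dist == 0:
        return True  # the observer's own position counts as visible
    angle = math.atan2(dy, dx)
    # Signed angular difference wrapped into [-pi, pi)
    diff = (angle - obs.heading + math.pi) % (2 * math.pi) - math.pi
    return abs(diff) <= obs.fov / 2

def visible_objects(obs: Observer, objects: dict) -> list:
    """Names of the objects that would need rendering this frame."""
    return [name for name, (px, py) in objects.items() if is_visible(obs, px, py)]

if __name__ == "__main__":
    viewer = Observer(x=0.0, y=0.0, heading=0.0, fov=math.radians(90), view_distance=100.0)
    world = {"tree": (10.0, 2.0), "house": (-20.0, 0.0), "mountain": (500.0, 0.0), "car": (5.0, 4.0)}
    print(visible_objects(viewer, world))  # ['tree', 'car']
```

The house is behind the viewer and the mountain is out of range, so only two of the four objects would be drawn.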

Post by permutojoe on Apr 16, 2017 2:23:45 GMT

How close is 4K resolution to typical human vision? Does anyone know?

Post by Deleted on Apr 16, 2017 3:05:36 GMT

Post by sugarbiscuits on Apr 16, 2017 6:43:16 GMT

Like in the movie The Thirteenth Floor?

The lead finds out he's living in a simulated world.

Post by general313 on Apr 17, 2017 14:59:47 GMT

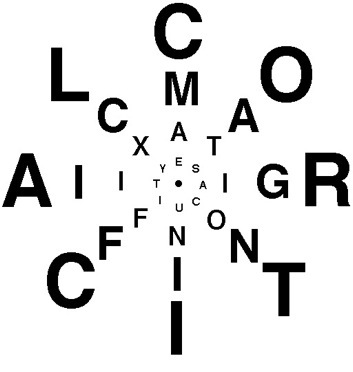

This Wikipedia article says there are 120 million rods and 6 million cones: en.wikipedia.org/wiki/Photoreceptor_cell#Humans

That would place an upper limit of 120 megapixels (black and white). The photoreceptors are not evenly distributed, though: the cones are much denser in the middle (the fovea). The area of sharp focus in the visual field is quite tiny, with very low-resolution peripheral vision, and the eye compensates for this by constantly darting around. This image gives an idea.
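To connect this to the earlier 4K question: a consumer 4K (UHD) frame is 3840 × 2160 pixels. The sketch below simply divides the receptor counts quoted in the post by that pixel count. It assumes a one-receptor-per-pixel comparison is meaningful, which it only roughly is: receptors are not laid out on a uniform grid, many of them converge onto far fewer nerve fibers, and a screen pixel carries three color channels.

```python
# Crude count-for-count comparison; receptor figures are the ones quoted in the post.
UHD_4K = (3840, 2160)   # consumer "4K" UHD frame, in pixels
RODS = 120_000_000      # rods (as quoted from Wikipedia above)
CONES = 6_000_000       # cones (as quoted from Wikipedia above)

pixels_4k = UHD_4K[0] * UHD_4K[1]
mp_4k = pixels_4k / 1e6

print(f"4K UHD frame: {pixels_4k:,} pixels ({mp_4k:.1f} MP)")  # 8,294,400 pixels (8.3 MP)
print(f"Rods per 4K pixel:  {RODS / pixels_4k:.1f}")          # 14.5
print(f"Cones per 4K pixel: {CONES / pixels_4k:.2f}")          # 0.72
```

By this crude measure, a 4K frame has more pixels than the eye has cones, but about 14.5 times fewer than it has rods.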

Post by general313 on Apr 17, 2017 15:05:05 GMT

> What if the universe as we know it is a virtual reality where only what we perceive is rendered, especially video-wise? From a computing power standpoint, aren't we pretty close to this if not easily there already?

Besides rendering, one must model (simulate) that which is seen. For static scenery that's not a big deal, but things like moving water (falls, surf) are more computationally demanding (that's why you don't see much of them in video games). In general, I think the modeling problem is much greater than the rendering problem. Animals would be even more challenging. So would animals be considered part of the simulation? Or are they part of the audience?

Post by permutojoe on Apr 18, 2017 2:06:22 GMT

I found a site that said that too, but it also said only about 7 of those MP are really important.

My understanding is that there will eventually be nano-computers implanted in the brain that can interact with neurons and such. If I'm not mistaken, this is already happening to a limited extent in the treatment of Parkinson's disease. It's a different topic, but very interesting.

Post by permutojoe on Apr 18, 2017 2:16:46 GMT

> > What if the universe as we know it is a virtual reality where only what we perceive is rendered, especially video-wise? From a computing power standpoint, aren't we pretty close to this if not easily there already?
>
> Besides rendering, one must model (simulate) that which is seen. For static scenery that's not a big deal, but things like moving water (falls, surf) are more computationally demanding (that's why you don't see so much of them in video games). I think in general the modeling problem is much greater than the rendering problem. Animals would be even more challenging. So would animals be considered part of the simulation? Or are they part of the audience?

Yes, I suppose modelling of the entire universe would need to happen to some degree, and to a greater extent for points closer to the participant. There could be very clever algorithms, though, that do this effectively while minimizing the computing power needed.

Probably, but perhaps not positively.
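One way to approximate "more modelling closer to the participant" is to simulate distant things less often. Here is a minimal sketch, assuming a single participant and a hypothetical policy in which the update interval doubles each time the distance doubles. `update_interval`, `objects_to_update` and the `near` threshold are illustrative names, not taken from any particular engine.

```python
import math

def update_interval(distance: float, near: float = 10.0) -> int:
    """Ticks between simulation updates for an object `distance` away.

    Hypothetical policy: every tick inside `near`; beyond that, the interval
    doubles each time the distance doubles.
    """
    if distance <= near:
        return 1
    return 2 ** math.ceil(math.log2(distance / near))

def objects_to_update(tick: int, distances: dict) -> list:
    """Names of objects whose turn it is to be simulated on this tick."""
    return [name for name, d in distances.items() if tick % update_interval(d) == 0]

distances = {"nearby rock": 5.0, "river": 40.0, "distant galaxy": 1e9}
for tick in range(5):
    print(tick, objects_to_update(tick, distances))
```

Everything is updated on tick 0. On ticks 1 to 3 only the rock is updated, the river joins again on tick 4, and the galaxy is touched only once every 2**27 ticks.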

Post by general313 on Apr 18, 2017 14:54:16 GMT

> > Besides rendering, one must model (simulate) that which is seen. For static scenery that's not a big deal, but things like moving water (falls, surf) are more computationally demanding (that's why you don't see so much of them in video games). I think in general the modeling problem is much greater than the rendering problem. Animals would be even more challenging. So would animals be considered part of the simulation? Or are they part of the audience?
>
> Yes I suppose modelling of the entire universe would need to happen to some degree, to greater extent for points that are closer to the participant. There could be very clever algorithms though that might do this effectively while minimizing computer power needed.

Interesting. It might be possible to combine progressive refinement with lazy evaluation of the areas: evaluate to high precision and deep levels only where detail is needed, with less detail outside the areas of interest, just enough to maintain overall continuity in the system. Maybe some of the peculiarities of quantum physics (especially as described by the Copenhagen interpretation) could be explained by a model of the universe that works like that. My natural leanings are not toward that interpretation, nor toward a non-materialistic view of reality, but it is nonetheless an intriguing thought.
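Progressive refinement with lazy evaluation can be illustrated with a quadtree: a square region is split into four only where a viewer is close, and cell values are computed only for the cells actually produced, then cached with `functools.lru_cache` so they are reused if the same cells come up again. This is a minimal sketch of that general level-of-detail idea, not a model of physics. `field`, `cell_value` and `refine` are hypothetical names, and the splitting rule (viewer closer than one cell width to the cell centre) is an arbitrary choice.

```python
import math
from functools import lru_cache

def field(x: float, y: float) -> float:
    """Hypothetical stand-in for some expensive quantity evaluated at a point."""
    return math.sin(x) * math.cos(y)

@lru_cache(maxsize=None)
def cell_value(x0: float, y0: float, size: float) -> float:
    """Coarse value of one square cell, computed on first request and then cached."""
    return field(x0 + size / 2, y0 + size / 2)

def refine(x0, y0, size, viewer, max_depth, depth=0, out=None):
    """Split cells into four only near `viewer`; return leaves as (x0, y0, size, value)."""
    if out is None:
        out = []
    cx, cy = x0 + size / 2, y0 + size / 2
    dist = math.hypot(viewer[0] - cx, viewer[1] - cy)
    # Keep refining while the viewer is closer to the cell centre than one cell width.
    if depth < max_depth and dist < size:
        half = size / 2
        for dx in (0.0, half):
            for dy in (0.0, half):
                refine(x0 + dx, y0 + dy, half, viewer, max_depth, depth + 1, out)
    else:
        # Far (or deepest) cells stay whole: less detail, but the region has no gaps.
        out.append((x0, y0, size, cell_value(x0, y0, size)))
    return out

leaves = refine(0.0, 0.0, 64.0, viewer=(1.0, 1.0), max_depth=5)
print(len(leaves), min(s for _, _, s, _ in leaves))  # 16 2.0
```

The 64 × 64 region ends up as only 16 cells: 2-unit cells around the viewer and 32-unit cells far away, while the leaves still tile the whole area, which is one reading of "just enough so that overall continuity in the system is maintained."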

Post by permutojoe on Apr 20, 2017 0:18:27 GMT

I think you can reasonably go a lot further than that. Consider Grand Theft Auto: nothing needs to be simulated except what you're currently perceiving and what you could perceive within the next x units of time, where x depends on the processing speed of the system.
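The "next x units of time" idea can be turned into a simulation radius: anything that could come into view within the horizon x must be simulated, and everything else can stay frozen. Here is a minimal sketch under stated assumptions: a 2D world, straight-line motion, and known maximum speeds for the player and for other entities. `Entity`, `active_radius`, `step` and `horizon` (standing in for the post's x) are hypothetical names.

```python
import math
from dataclasses import dataclass

@dataclass
class Entity:
    name: str
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0

def active_radius(view_distance, max_player_speed, max_entity_speed, horizon):
    """Distance within which something could become visible in the next `horizon` seconds.

    The player and the entity can both close the gap, so anything farther away
    than this cannot be seen before the horizon runs out.
    """
    return view_distance + (max_player_speed + max_entity_speed) * horizon

def step(entities, player_xy, view_distance, max_player_speed, max_entity_speed, horizon, dt):
    """Advance only entities inside the active radius; everything else stays frozen."""
    r = active_radius(view_distance, max_player_speed, max_entity_speed, horizon)
    px, py = player_xy
    simulated = []
    for e in entities:
        if math.hypot(e.x - px, e.y - py) <= r:
            e.x += e.vx * dt
            e.y += e.vy * dt
            simulated.append(e.name)
    return simulated

cars = [Entity("car A", 50.0, 0.0, vx=10.0), Entity("car B", 2000.0, 0.0, vx=10.0)]
print(step(cars, (0.0, 0.0), view_distance=200.0, max_player_speed=30.0,
           max_entity_speed=20.0, horizon=5.0, dt=0.1))  # ['car A']
```

With these numbers the active radius is 450 units, so car A moves and car B, 2000 units away, is not touched this step. A longer horizon (a faster machine, in the post's terms) simply widens the bubble.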
